“People can change, but only within bounds. In contrast, the present industrial system is dynamically unstable. It is organized for indefinite expansion and the concurrent unlimited creation of new needs, which in an industrial environment soon become basic necessities … Such growth makes the incongruous demand that man seek his satisfaction by submitting to the logic of his tools. The demands made by tools on people become increasingly costly … Increasing manipulation of man becomes necessary to overcome the resistance of his vital equilibrium to the dynamic of growing industries; it takes the form of educational, medical, and administrative therapies. Education turns out competitive consumers; medicine keeps them alive in the engineered environment they have come to require; bureaucracy reflects the necessity of exercising social control over people to do meaningless work. The parallel increase in the cost of the defense of new levels of privilege through military, police, and insurance measures reflects the fact that in a consumer society there are inevitably two kinds of slaves: the prisoners of addiction and the prisoners of envy.”

— Ivan Illich, Tools for Conviviality (1973)

Welcome to another installment of the Convivial Society. This time around, some brief reflections on human beings entangled in technological systems.

You may have seen or heard about Elon Musk’s recent Neuralink demonstration involving some pigs, who weren’t altogether cooperative. Neuralink is the four-year-old Musk company working on a computer-brain interface. Musk claims that Neuralink technology will one day cure, among other ailments, blindness, paralysis, and mental illness. Additionally, Musk believes it will dramatically empower human beings by augmenting our mental and even physical capacities. In anticipation of the recent Neuralink event, his Twitter feed alluded to Matrix-like wonders to come. Musk also tends to tout this project as a safeguard against the future threat of super-intelligent AI. It’s how we’ll keep pace with the machines. “With a high-bandwidth brain-machine interface,” Musk explained, “I think we can go along for the ride and effectively have the option of merging with AI.”

Needless to say, there is every reason to be skeptical about every one of those claims. As Antonio Regalado put it in his discussion of the event, it amounted to little more than neuroscience theater. That said, the hopes Musk has expressed are illustrative of a dynamic that has already been playing out in more prosaic everyday contexts. The nature of this dynamic becomes apparent when we ask a hypothetical question concerning computer-brain interfaces: Who is being plugged in to what? Or, to put it another way, who is the dominant partner, the computer or the brain? Are we plugging into a system that will serve our ends, or are we being better fitted to serve the interests of the technological system? I suspect that Musk would say the question misses the point: there is no dominant partner. Rather, the relationship would be, as he put it, symbiotic. Clearly, he talks about it as if it will prove to be an enhancement of the human condition and one that will help us survive the threat of AI-induced obsolescence.

But we could just as easily imagine that the human interest will be superseded by the imperatives of the machine, that the person will be bent to the service and logic of the machine. There is ample precedent. When a system becomes sufficiently complex, the human element more often than not becomes a problem to be solved. The solution is to either remove the human element or otherwise re-train the person to conform and recalibrate their behavior to the specifications of the machine. Alternatively, society develops a variety of therapies to sustain the person who must now live within a techno-economic milieu that is hostile to human flourishing.

In other words, the end being served is not human flourishing, it is the functioning of the technological system. Musk’s rationale for Neuralink is just an overhyped case in point of this logic: the answer to the problem posed by technological systems that have grown dangerous is not to reconsider the advisability of building such systems, rather it is to further technologize the human being so as to assure survival in a technological milieu that has grown fundamentally hostile to human well-being. It clearly recalls the instinct to solve a crisis by escalation, which Ivan Illich identified in Tools for Conviviality.

In the same work, Illich wrote,

There are two ranges in the growth of tools: the range within which machines are used to extend human capability and the range in which they are used to contract, eliminate, or replace human functions. In the first, man as an individual can exercise authority on his own behalf and therefore assume responsibility. In the second, the machine takes over—first reducing the range of choice and motivation in both the operator and the client, and second imposing its own logic and demand on both.

Insidiously, these developments are typically packaged as either matters of convenience or liberation. But the promises never materialize, in part, because they veil a greater entanglement in systems and institutions that are ultimately designed to serve their own ends. In part, also, because it is never clear what exactly we are being liberated for other than further consumption of the products/services/goods offered to us by the techno-economic systems. It was a dynamic eloquently described by Lewis Mumford when, in the mid-twentieth century, he asked “Why has our age surrendered so easily to the controllers, the manipulators, the conditioners of an authoritarian technics?” Here is his answer:

The bargain we are being asked to ratify takes the form of a magnificent bribe. Under the democratic-authoritarian social contract, each member of the community may claim every material advantage, every intellectual and emotional stimulus he may desire, in quantities hardly available hitherto even for a restricted minority: food, housing, swift transportation, instantaneous communication, medical care, entertainment, education. But on one condition: that one must not merely ask for nothing that the system does not provide, but likewise agree to take everything offered, duly processed and fabricated, homogenized and equalized, in the precise quantities that the system, rather than the person, requires. Once one opts for the system no further choice remains. In a word, if one surrenders one’s life at source, authoritarian technics will give back as much of it as can be mechanically graded, quantitatively multiplied, collectively manipulated and magnified.

The temptation, in other words, has been to assume that the goods we are offered by the current techno-social regime are the goods that we, in fact, need to thrive as the sort of creatures we are. This is why any serious consideration of the questions raised by technology must eventually become a consideration of what it means to be human and what shape a just society should take. These are, of course, political questions of the first order, or at least they used to be until they were superseded by the imperatives of economic growth.

A few years ago, I suggested that there was a tradition of humanist technology criticism worth engaging. I contemplated at one point drawing up a proposal for something like a humanist tech criticism reader. Maybe someday something will come of that. The general idea that holds this tradition of critics together is the conviction that some account of what people are for and of the conditions under which they flourish should inform our evaluation of technology. I recognize now as I did then that this can be contested and contentious territory, but I fear that unless we figure out how to at least raise these questions we will proceed down a path toward de facto post-humanism.

At the end of my reflections a few years back, I suggested that a humanist critique of technology entails a preference for technology that (1) operates at a human scale, (2) works toward human ends, (3) allows for the fullest possible flourishing of a person’s capabilities, (4) does not obfuscate moral responsibility, and (5) acknowledges and respects certain limits inherent to the human condition.

I leave you with those observations today. I trust that they can at least be the point of departure for productive conversations.

News and Resources

Are we already living in a tech dystopia? A few scholars respond to the question, including Jonathan Zittrain and David Golumbia.

Repurposing Gibson’s well-known quip, I suggested some time ago that the dystopia is already here — it’s just not very evenly distributed.

A short but suggestive post from Drew Austin revisiting McLuhan’s 1967 claim, “the city no longer exists except as a cultural ghost for tourists” in light of the pandemic:

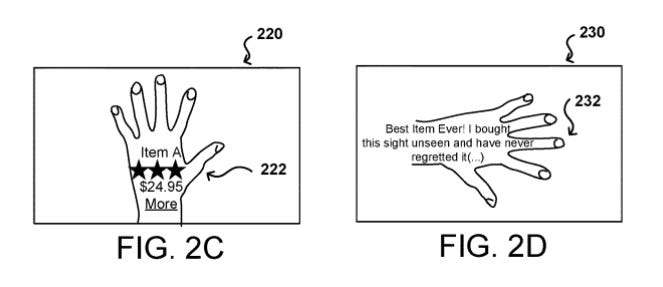

“… the city might be a ‘cultural ghost for tourists’ to a public intellectual like McLuhan even as it remains a very real and immediate place for many others—those who are still ‘from somewhere.’ To Martínez, the ‘Zoom class’ is currently accelerating headlong into the dubious future of entirely rootless, technology-enabled nomadism and increasingly separating themselves from the people who must remain physically and culturally rooted in a specific somewhere.”

A review of recent Big Tech patents. Two of note from Amazon: using augmented reality to put ads on your body and drones for home surveillance.

Consider Hapbee, a wearable device that promises to “replicate different feelings by playing safe, low energy magnetic signals.” The feelings it purports to stimulate are “Happy, Alert, Pick Me Up, Relaxed, Calm and Sleepy.” Hapbee is paired with an app that allows you to “play your feelings any time, any where.” Spotify, but for your emotional life. That’s not a bad line, come to think of it. It is true, of course, that we do use Spotify (and much else, of course) to tune our emotional life. Supposing, for argument’s sake, that Hapbee delivers on its promise, is it really all that different from a mood-enhancing playlist or a cup of coffee in the morning? At issue is whether or not means to an end are indifferent and interchangeable. The presumption that they are lies deep at the heart of technological culture. But the presumption, at least in some cases, especially cases of moral significance, is wrong. There’s the old distinction between goods that are internal to a practice and those that are external to it. The latter of these we might think of as mercenary in nature. If I pursue a friendship for the sake of mutual affection and companionship, I’m pursuing the friendship for the sake of a good that is internal to the practice of friendship. If I pursue it for the sake of social capital that the friendship will bring me, I am pursuing it for goods that are external to the practice. And, of course, pursued in this way, the true good is never attained. With regard to less morally fraught ends than happiness, alertness say, a similar dynamic applies. What range of practices, what form of life, is conducive to a state of alertness or calm? Am I conducting myself, by choice or coercion, in such a way that I cannot live in a manner that may allow for calm or alertness? Does the device merely allow me to continue functioning in a self-destructive manner then? Not unlike how caffeine may allow me to remain marginally productive while getting inadequate sleep so that my long-term health is jeopardized? Thus concludes this brief and incomplete exercise in moral reasoning.

From a 2019 post by Alan Jacobs that I just recently stumbled on again:

“Facebook is the Sauron of the online world, Twitter the Saruman. Let’s rather live in Tom Bombadil’s world, where we can be eccentric, peculiar perhaps, without ambition, content to tend our little corner of Middle Earth with charity and grace. We’ve moved a long way from Tim Carmody’s planetary metaphor, which, as I say, I feel the force of, but whether what I’m doing ultimately matters or not, I’m finding it helpful to work away in this little highland garden, above the turmoil of the social-media sea, finding small beautiful things and caring for them and sharing them with a few friends. One could do worse.”

Shannon Vallor on what the pandemic has revealed: “The lesson of COVID-19 is that scientific and technical expertise stripped away from humane wisdom—social, moral and political knowledge of what matters, what we value, what needs preserving, repairing and caring for together—is a mere illusion of security. It’s an expensive life raft lacking rations, a compass, a map or a paddle. It cannot carry us safely into the futures we all need to build, for our nations, humankind and for coming generations of life on this planet.”

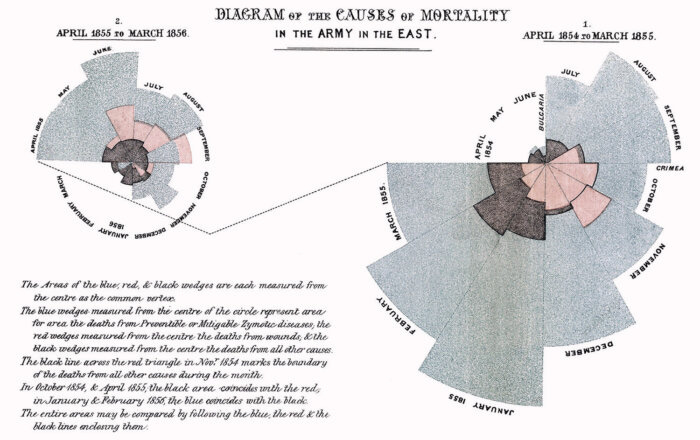

From “A History of Early Public Health Infographics,” an excerpt from Murray Dick’s recently published book, The Infographic: A History of Data Graphics in News and Communications:

“From 1820 to 1830, an enthusiasm for statistics began to emerge across the western world, leading to an era of statistics concerned with reform. It was led by individuals who sought to disrupt what they saw as the chaos of politics and replace it with a new apolitical regime of empirical, observed fact. This new approach would come to be seen as a field of action, as an applied science, providing empirical weight to the new, intellectually dominant spirit of political economy.”

Sarah Hendren on the tyranny of chairs, adapted from What Can a Body Do? How We Meet the Built World: “For most of human history, a mix of postures was the norm for a body meeting the world. Squatting has been as natural a posture as sitting for daily tasks, and lying down was a conventional pose for eating in some ancient cultures. So why has sitting in chairs persisted in so many modern cultures?”

Re-framings

— “A Bright Field” by the Anglo-Welsh poet R. S. Thomas, who died in 2000. I encountered the poem in Jeffrey Bilbro’s wonderful essay on Thomas, “Turn Aside: The Poetic Vision of R. S. Thomas”:

I have seen the sun break through

to illuminate a small field

for a while, and gone my way

and forgotten it. But that was the pearl

of great price, the one field that had

the treasure in it. I realize now

that I must give all that I have

to possess it. Life is not hurrying

on to a receding future, nor hankering after

an imagined past. It is the turning

aside like Moses to the miracle

of the lit bush, to a brightness

that seemed as transitory as your youth

once, but is the eternity that awaits you.

As I was reading Jeff’s essay and preparing to type these lines, I heard the door of my daughters’ room open. It’s late. They should be sleeping. It was not a welcome sound. I grumbled to myself about getting this newsletter done. Then my oldest stumbled bleary-eyed into my room, crawled on top of me, and laid her head on my chest, so naturally I, altogether chastised, turned aside to take in the light of that moment.

— From Ursula K. Le Guin’s Always Coming Home, quoted by Alan Jacobs in “Handmind in Covidtide,” which is worth your time:

He thought of very little besides clay, and shaping, and glazing, and firing. It was a good thing for me to learn a craft with a true maker. It may have been the best thing I have done. Nothing we do is better than the work of handmind. When mind uses itself without the hands it runs the circle and may go too fast; even speech using the voice only may go too fast. The hand that shapes the mind into clay or written word slows thought to the gait of things and lets it be subject to accident and time.

The Conversation

Alright, so I didn’t quite get this to you in August. Nonetheless, consider this installment the second of last month’s. Two more to come in September. Is it really September? I’m glad for it. It’s the time of year that I start longing for the first cool breeze to signal the end of the Florida summer. It won’t come for a while yet, but I’m eagerly waiting.

Cheers,

Michael